What Americans Need to Know About the 2025 Tax Law Changes

As 2025 tax season begins, a wave of new tax law changes is rolling in, and for millions of Americans, understanding these updates is crucial for smart financial planning. Whether you’re a wage earner, a small business owner, or preparing for retirement, here’s a breakdown of the most important shifts in the U.S. tax landscape this year.

1. The Standard Deduction Has Increased

The IRS has raised the standard deduction again to adjust for inflation. For the 2025 tax year:

Single filers can deduct $15,000, up from $14,600 in 2024.
Married couples filing jointly can deduct $30,000, up from $29,200.
Heads of household can deduct $22,500, up from $21,900.

Because the standard deduction is larger, fewer taxpayers will come out ahead by itemizing, which simplifies filing for many.

2. Child Tax Credit Adjustments

The Child Tax Credit continues to support families. For 2025:

The maximum credit is $2,000 per qualifying child under age 17.
The refundable portion (the Additional Child Tax Credit) is up to $1,700 per child.
The credit begins to phase out at $200,000 of modified adjusted gross income for single filers and $400,000 for joint filers.

3. Changes for Gig and Freelance Workers

Self-employed individuals and gig economy workers should take note of the Form 1099-K reporting threshold:

The reporting threshold for payments for goods and services through third-party platforms (like Venmo, Etsy, or Uber) is $5,000, a phase-in step toward the originally planned $600 threshold.
Because $5,000 is far below the previous threshold of $20,000 and 200 transactions, more people will receive 1099-K forms. That income was always taxable, but it is now reported to the IRS and must appear on your return.

The IRS has also released updated guidance on how to categorize and deduct expenses related to freelance work.

4. Retirement Contribution Limits Have Increased

To help Americans save more for retirement, contribution limits have gone up:

401(k): You can now contribute up to $23,500.
Catch-up (age 50+): An additional $7,500.
Special catch-up (ages 60–63): A higher catch-up of $11,250, in place of the standard $7,500.
IRA: The limit remains $7,000 for those under 50, and $8,000 for those 50 and older.

The $23,500 limit reflects the annual inflation adjustment, while the higher catch-up for ages 60–63 was created by the SECURE 2.0 Act.

5. EV and Green Energy Incentives Expanded

If you’re going green in 2025, the tax code has some perks for you:

Electric vehicle (EV) credits offer up to $7,500 for new EVs.
Used EVs may qualify for a credit of $4,000 or 30% of the sale price, whichever is less.
Home energy upgrades such as solar panels, efficient windows, and heat pumps may qualify for credits of up to 30% of the cost.

6. Capital Gains and Investment Income

While capital gains tax rates remain unchanged, the taxable-income thresholds have increased for 2025:

0% rate: Applies to taxable income up to $48,350 (single) or $96,700 (married filing jointly).
15% rate: Applies to taxable income between $48,350 and $533,400 (single) or $96,700 and $600,050 (joint).
20% rate: Applies to taxable income above those thresholds.

The Net Investment Income Tax (NIIT) of 3.8% still applies to individuals with modified adjusted gross income above $200,000 and married couples filing jointly above $250,000.

7. Expiring Provisions to Watch

Several provisions from the 2017 Tax Cuts and Jobs Act (TCJA) are set to expire after 2025. Though these changes aren’t effective yet, experts suggest planning ahead for the end of:

Individual income tax rate reductions
The doubled standard deduction
The increased estate tax exemption

If Congress doesn’t act, many Americans could see higher tax bills in 2026.

Navigating tax season can be tricky, but staying informed is half the battle. With these 2025 tax changes in mind, now’s the time to adjust your withholding, revisit your deductions, and make sure you’re not leaving money on the table. As always, consult a trusted tax professional for personalized advice.

Stay tuned to Readovia for more essential financial updates and smart money insights all year long.
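Three of the rules in this article are easy to misread: how the ages 60–63 catch-up relates to the regular catch-up, how the Child Tax Credit phases out, and how long-term gains are taxed once they are stacked on top of ordinary income. The Python sketch below illustrates them under simplifying assumptions. It uses the $2,000 credit, the $200,000/$400,000 phase-out thresholds, and the 401(k) figures quoted in this article; it assumes the statutory phase-out rate of $50 for each $1,000 (or fraction of $1,000) of modified adjusted gross income over the threshold; and it ignores filing-status variations, the 3.8% NIIT, and every other real-world detail. The function names are hypothetical, and this is a simplified illustration, not tax software.

```python
import math


def max_401k_deferral(age: int) -> int:
    """2025 employee 401(k) deferral limit for someone of `age` at year end."""
    base = 23_500
    if 60 <= age <= 63:
        # SECURE 2.0's higher catch-up replaces (does not add to) the $7,500.
        return base + 11_250
    if age >= 50:
        return base + 7_500  # standard catch-up for ages 50 and older
    return base


def child_tax_credit(children: int, magi: float, joint: bool,
                     per_child: int = 2_000) -> int:
    """Child Tax Credit after the income phase-out.

    The credit falls by $50 for each $1,000, or fraction of $1,000,
    of modified adjusted gross income (MAGI) above the threshold.
    """
    threshold = 400_000 if joint else 200_000
    excess = max(0.0, magi - threshold)
    reduction = 50 * math.ceil(excess / 1_000)
    return max(0, children * per_child - reduction)


def long_term_gains_tax(ordinary_taxable: float, gains: float,
                        zero_top: float, fifteen_top: float) -> float:
    """Tax on long-term capital gains, ignoring the 3.8% NIIT.

    Gains sit on top of ordinary taxable income, and each slice of the
    gains is taxed at the rate of the band it lands in. zero_top and
    fifteen_top are the taxable-income levels where the 0% and 15% bands end.
    """
    start = ordinary_taxable
    end = ordinary_taxable + gains
    # Slice of the gains between zero_top and fifteen_top is taxed at 15%.
    in_15 = max(0.0, min(end, fifteen_top) - max(start, zero_top))
    # Slice above fifteen_top is taxed at 20%.
    in_20 = max(0.0, end - max(start, fifteen_top))
    # Anything below zero_top is taxed at 0%, so it adds nothing.
    return 0.15 * in_15 + 0.20 * in_20


if __name__ == "__main__":
    print(max_401k_deferral(61))                    # 34750
    print(child_tax_credit(2, 410_500, joint=True))  # 3450
    # Hypothetical thresholds chosen for easy arithmetic, not the IRS figures.
    print(long_term_gains_tax(30_000, 40_000, 50_000, 500_000))  # 3000.0
```

The capital gains thresholds are parameters rather than hard-coded values; the example plugs in round hypothetical numbers ($50,000 and $500,000) so the stacking is easy to follow. With $30,000 of ordinary taxable income, the first $20,000 of a $40,000 gain falls in the 0% band and the remaining $20,000 in the 15% band. Substitute the published thresholds for your filing status to use it with real figures.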

MIT students’ works redefine human-AI collaboration

Imagine a boombox that tracks your every move and suggests music to match your personal dance style. That’s the idea behind “Be the Beat,” one of several projects from MIT course 4.043/4.044 (Interaction Intelligence), taught by Marcelo Coelho in the Department of Architecture, that were presented at the 38th annual NeurIPS (Neural Information Processing Systems) conference in December 2024. With over 16,000 attendees converging in Vancouver, NeurIPS is a competitive and prestigious conference dedicated to research and science in the field of artificial intelligence and machine learning, and a premier venue for showcasing cutting-edge developments.

The course investigates the emerging field of large language objects, and how artificial intelligence can be extended into the physical world. While “Be the Beat” transforms the creative possibilities of dance, other student submissions span disciplines such as music, storytelling, critical thinking, and memory, creating generative experiences and new forms of human-computer interaction. Taken together, these projects illustrate a broader vision for artificial intelligence: one that goes beyond automation to catalyze creativity, reshape education, and reimagine social interactions.

Be the Beat

“Be the Beat,” by Ethan Chang, an MIT mechanical engineering and design student, and Zhixing Chen, an MIT mechanical engineering and music student, is an AI-powered boombox that suggests music based on a dancer’s movement. Throughout history and across cultures, dance has traditionally been guided by music, yet the idea of dancing to create music is rarely explored. “Be the Beat” creates a space for human-AI collaboration on freestyle dance, empowering dancers to rethink the traditional dynamic between dance and music. It uses PoseNet to describe the dancer’s movements to a large language model, which analyzes the dance style and queries APIs to find music with a similar style, energy, and tempo. Dancers interacting with the boombox reported having more control over artistic expression and described the boombox as a novel approach to discovering dance genres and choreographing creatively.

A Mystery for You

“A Mystery for You,” by Mrinalini Singha SM ’24, a recent graduate in the Art, Culture, and Technology program, and Haoheng Tang, a recent graduate of the Harvard University Graduate School of Design, is an educational game designed to cultivate critical thinking and fact-checking skills in young learners. The game leverages a large language model (LLM) and a tangible interface to create an immersive investigative experience. Players act as citizen fact-checkers, responding to AI-generated “news alerts” printed by the game interface. By inserting cartridge combinations to prompt follow-up “news updates,” they navigate ambiguous scenarios, analyze evidence, and weigh conflicting information to make informed decisions.

This human-computer interaction experience challenges our news-consumption habits by eliminating touchscreen interfaces, replacing perpetual scrolling and skim-reading with a haptically rich analog device. By combining the affordances of slow media with new generative media, the game promotes thoughtful, embodied interactions while equipping players to better understand and challenge today’s polarized media landscape, where misinformation and manipulative narratives thrive.

Memorscope

“Memorscope,” by MIT Media Lab research collaborator Keunwook Kim, is a device that creates collective memories by merging the deeply human experience of face-to-face interaction with advanced AI technologies. Inspired by how we use microscopes and telescopes to examine and uncover hidden and invisible details, Memorscope allows two users to “look into” each other’s faces, using this intimate interaction as a gateway to the creation and exploration of their shared memories.

The device leverages AI models from OpenAI and Midjourney, which introduce different aesthetic and emotional interpretations and produce a dynamic, collective memory space. This space transcends the limitations of traditional shared albums, offering a fluid, interactive environment where memories are not just static snapshots but living, evolving narratives, shaped by the ongoing relationship between users.

Narratron

“Narratron,” by Harvard Graduate School of Design students Xiying (Aria) Bao and Yubo Zhao, is an interactive projector that co-creates and co-performs children’s stories through shadow puppetry using large language models. Users can press the shutter to “capture” the protagonists they want in the story, and the system takes hand shadows (such as animal shapes) as input for the main characters. The system then develops the story plot as new shadow characters are introduced. The story appears through a projector as a backdrop for shadow puppetry while being narrated through a speaker as users turn a crank to “play” in real time. By combining visual, auditory, and bodily interactions in one system, the project aims to spark creativity in shadow play storytelling and enable multi-modal human-AI collaboration.

Perfect Syntax

“Perfect Syntax,” by Karyn Nakamura ’24, is a video art piece examining the syntactic logic behind motion and video. Using AI to manipulate video fragments, the project explores how the fluidity of motion and time can be simulated and reconstructed by machines. Drawing inspiration from both philosophical inquiry and artistic practice, Nakamura’s work interrogates the relationship between perception, technology, and the movement that shapes our experience of the world. By reimagining video through computational processes, Nakamura investigates the complexities of how machines understand and represent the passage of time and motion.

Explained: Generative AI’s environmental impact

In a two-part series, MIT News explores the environmental implications of generative AI. In this article, we look at why this technology is so resource-intensive. A second piece will investigate what experts are doing to reduce genAI’s carbon footprint and other impacts.

The excitement surrounding potential benefits of generative AI, from improving worker productivity to advancing scientific research, is hard to ignore. While the explosive growth of this new technology has enabled rapid deployment of powerful models in many industries, the environmental consequences of this generative AI “gold rush” remain difficult to pin down, let alone mitigate.

The computational power required to train generative AI models that often have billions of parameters, such as OpenAI’s GPT-4, can demand a staggering amount of electricity, which leads to increased carbon dioxide emissions and pressures on the electric grid.

Furthermore, deploying these models in real-world applications, enabling millions to use generative AI in their daily lives, and then fine-tuning the models to improve their performance draws large amounts of energy long after a model has been developed.

Beyond electricity demands, a great deal of water is needed to cool the hardware used for training, deploying, and fine-tuning generative AI models, which can strain municipal water supplies and disrupt local ecosystems. The increasing number of generative AI applications has also spurred demand for high-performance computing hardware, adding indirect environmental impacts from its manufacture and transport.

“When we think about the environmental impact of generative AI, it is not just the electricity you consume when you plug the computer in. There are much broader consequences that go out to a system level and persist based on actions that we take,” says Elsa A. Olivetti, professor in the Department of Materials Science and Engineering and the lead of the Decarbonization Mission of MIT’s new Climate Project.

Olivetti is senior author of a 2024 paper, “The Climate and Sustainability Implications of Generative AI,” co-authored by MIT colleagues in response to an Institute-wide call for papers that explore the transformative potential of generative AI, in both positive and negative directions for society.

Demanding data centers

The electricity demands of data centers are one major factor contributing to the environmental impacts of generative AI, since data centers are used to train and run the deep learning models behind popular tools like ChatGPT and DALL-E.

A data center is a temperature-controlled building that houses computing infrastructure, such as servers, data storage drives, and network equipment. For instance, Amazon has more than 100 data centers worldwide, each of which has about 50,000 servers that the company uses to support cloud computing services.

While data centers have been around since the 1940s (the first was built at the University of Pennsylvania in 1945 to support the first general-purpose digital computer, the ENIAC), the rise of generative AI has dramatically increased the pace of data center construction.

“What is different about generative AI is the power density it requires. Fundamentally, it is just computing, but a generative AI training cluster might consume seven or eight times more energy than a typical computing workload,” says Noman Bashir, lead author of the impact paper, who is a Computing and Climate Impact Fellow at MIT Climate and Sustainability Consortium (MCSC) and a postdoc in the Computer Science and Artificial Intelligence Laboratory (CSAIL).

Scientists have estimated that the power requirements of data centers in North America increased from 2,688 megawatts at the end of 2022 to 5,341 megawatts at the end of 2023, partly driven by the demands of generative AI. Globally, the electricity consumption of data centers rose to 460 terawatt-hours in 2022. This would have made data centers the 11th largest electricity consumer in the world, between the nations of Saudi Arabia (371 terawatt-hours) and France (463 terawatt-hours), according to the Organization for Economic Co-operation and Development.

By 2026, the electricity consumption of data centers is expected to approach 1,050 terawatt-hours (which would bump data centers up to fifth place on the global list, between Japan and Russia).

While not all data center computation involves generative AI, the technology has been a major driver of increasing energy demands.

“The demand for new data centers cannot be met in a sustainable way. The pace at which companies are building new data centers means the bulk of the electricity to power them must come from fossil fuel-based power plants,” says Bashir.

The power needed to train and deploy a model like OpenAI’s GPT-3 is difficult to ascertain. In a 2021 research paper, scientists from Google and the University of California at Berkeley estimated the training process alone consumed 1,287 megawatt-hours of electricity (enough to power about 120 average U.S. homes for a year), generating about 552 tons of carbon dioxide.

While all machine-learning models must be trained, one issue unique to generative AI is the rapid fluctuations in energy use that occur over different phases of the training process, Bashir explains. Power grid operators must have a way to absorb those fluctuations to protect the grid, and they usually employ diesel-based generators for that task.

Increasing impacts from inference

Once a generative AI model is trained, the energy demands don’t disappear.

Each time a model is used, perhaps by an individual asking ChatGPT to summarize an email, the computing hardware that performs those operations consumes energy. Researchers have estimated that a ChatGPT query consumes about five times more electricity than a simple web search.

“But an everyday user doesn’t think too much about that,” says Bashir. “The ease-of-use of generative AI interfaces and the lack of information about the environmental impacts of my actions means that, as a user, I don’t have much incentive to cut back on my use of generative AI.”

With traditional AI, the energy usage is split fairly evenly between data processing, model training, and inference, which is the process of using a trained model to make predictions on new data. However, Bashir expects the electricity demands of generative AI inference to eventually dominate since these models are becoming ubiquitous in so many applications, and the electricity

Algorithms and AI for a better world

Amid the benefits that algorithmic decision-making and artificial intelligence offer, including revolutionizing speed, efficiency, and predictive ability in a vast range of fields, Manish Raghavan is working to mitigate associated risks, while also seeking opportunities to apply the technologies to help with preexisting social concerns.

“I ultimately want my research to push towards better solutions to long-standing societal problems,” says Raghavan, the Drew Houston Career Development Professor in MIT’s Sloan School of Management and the Department of Electrical Engineering and Computer Science and a principal investigator at the Laboratory for Information and Decision Systems (LIDS).

A good example of Raghavan’s intention can be found in his exploration of the use of AI in hiring.

Raghavan says, “It’s hard to argue that hiring practices historically have been particularly good or worth preserving, and tools that learn from historical data inherit all of the biases and mistakes that humans have made in the past.”

Here, however, Raghavan cites a potential opportunity.

“It’s always been hard to measure discrimination,” he says, adding, “AI-driven systems are sometimes easier to observe and measure than humans, and one goal of my work is to understand how we might leverage this improved visibility to come up with new ways to figure out when systems are behaving badly.”

Growing up in the San Francisco Bay Area with parents who both have computer science degrees, Raghavan says he originally wanted to be a doctor. Just before starting college, though, his love of math and computing called him to follow his family’s example into computer science. After spending a summer as an undergraduate doing research at Cornell University with Jon Kleinberg, professor of computer science and information science, he decided he wanted to earn his PhD there, writing his thesis on “The Societal Impacts of Algorithmic Decision-Making.”

Raghavan won awards for his work, including a National Science Foundation Graduate Research Fellowship Program award, a Microsoft Research PhD Fellowship, and the Cornell University Department of Computer Science PhD Dissertation Award.

In 2022, he joined the MIT faculty.

Perhaps hearkening back to his early interest in medicine, Raghavan has studied whether the determinations of a highly accurate algorithmic screening tool used in the triage of patients with gastrointestinal bleeding, known as the Glasgow-Blatchford Score (GBS), are improved by complementary advice from expert physicians.

“The GBS is roughly as good as humans on average, but that doesn’t mean that there aren’t individual patients, or small groups of patients, where the GBS is wrong and doctors are likely to be right,” he says. “Our hope is that we can identify these patients ahead of time so that doctors’ feedback is particularly valuable there.”

Raghavan has also worked on how online platforms affect their users, considering how social media algorithms observe the content a user chooses and then show them more of that same kind of content. The difficulty, Raghavan says, is that users may be choosing what they view in the same way they might grab a bag of potato chips, which are of course delicious but not all that nutritious. The experience may be satisfying in the moment, but it can leave the user feeling slightly sick.

Raghavan and his colleagues have developed a model of how a user with conflicting desires (for immediate gratification versus longer-term satisfaction) interacts with a platform. The model demonstrates how a platform’s design can be changed to encourage a more wholesome experience. The model won the Exemplary Applied Modeling Track Paper Award at the 2022 Association for Computing Machinery Conference on Economics and Computation.

“Long-term satisfaction is ultimately important, even if all you care about is a company’s interests,” Raghavan says. “If we can start to build evidence that user and corporate interests are more aligned, my hope is that we can push for healthier platforms without needing to resolve conflicts of interest between users and platforms. Of course, this is idealistic. But my sense is that enough people at these companies believe there’s room to make everyone happier, and they just lack the conceptual and technical tools to make it happen.”

Regarding his process of coming up with ideas for such tools and concepts for how to best apply computational techniques, Raghavan says his best ideas come to him when he’s been thinking about a problem off and on for a time. He would advise his students, he says, to follow his example of putting a very difficult problem away for a day and then coming back to it.

“Things are often better the next day,” he says.

When he’s not puzzling out a problem or teaching, Raghavan can often be found outdoors on a soccer field, as a coach of the Harvard Men’s Soccer Club, a position he cherishes.

“I can’t procrastinate if I know I’ll have to spend the evening at the field, and it gives me something to look forward to at the end of the day,” he says. “I try to have things in my schedule that seem at least as important to me as work to put those challenges and setbacks into context.”

As Raghavan considers how to apply computational technologies to best serve our world, he says he finds the most exciting thing going on in his field is the idea that AI will open up new insights into “humans and human society.”

“I’m hoping,” he says, “that we can use it to better understand ourselves.”

Making the art world more accessible

In the world of high-priced art, galleries usually act as gatekeepers. Their selective curation process is a key reason galleries in major cities often feature work from the same batch of artists. The system limits opportunities for emerging artists and leaves great art undiscovered.

NALA was founded by Benjamin Gulak ’22 to disrupt the gallery model. The company’s digital platform, which was started as part of an MIT class project, allows artists to list their art and uses machine learning and data science to offer personalized recommendations to art lovers.

By providing a much larger pool of artwork to buyers, the company is dismantling the exclusive barriers put up by traditional galleries and efficiently connecting creators with collectors.

“There’s so much talent out there that has never had the opportunity to be seen outside of the artists’ local market,” Gulak says. “We’re opening the art world to all artists, creating a true meritocracy.”

NALA takes no commission from artists, instead charging buyers an 11.5 percent commission on top of the artist’s listed price. Today more than 20,000 art lovers are using NALA’s platform, and the company has registered more than 8,500 artists.

“My goal is for NALA to become the dominant place where art is discovered, bought, and sold online,” Gulak says. “The gallery model has existed for such a long period of time that they are the tastemakers in the art world. However, most buyers never realize how restrictive the industry has been.”

From founder to student to founder again

Growing up in Canada, Gulak worked hard to get into MIT, participating in science fairs and robotics competitions throughout high school. When he was 16, he created an electric, one-wheeled motorcycle that got him on the popular television show “Shark Tank” and was later named one of the top inventions of the year by Popular Science.

Gulak was accepted into MIT in 2009 but withdrew from his undergrad program shortly after entering to launch a business around the media exposure and capital from “Shark Tank.” Following a whirlwind decade in which he raised more than $12 million and sold thousands of units globally, Gulak decided to return to MIT to complete his degree, switching his major from mechanical engineering to one combining computer science, economics, and data science.

“I spent 10 years of my life building my business, and realized to get the company where I wanted it to be, it would take another decade, and that wasn’t what I wanted to be doing,” Gulak says. “I missed learning, and I missed the academic side of my life. I basically begged MIT to take me back, and it was the best decision I ever made.”

During the ups and downs of running his company, Gulak took up painting to de-stress. Art had always been a part of Gulak’s life, and he had even done a fine arts study abroad program in Italy during high school. Determined to try selling his art, he collaborated with some prominent art galleries in London, Miami, and St. Moritz. Eventually he began connecting artists he’d met on travels from emerging markets like Cuba, Egypt, and Brazil to the gallery owners he knew.

“The results were incredible because these artists were used to selling their work to tourists for $50, and suddenly they’re hanging work in a fancy gallery in London and getting 5,000 pounds,” Gulak says. “It was the same artist, same talent, but different buyers.”

At the time, Gulak was in his third year at MIT and wondering what he’d do after graduation. He thought he wanted to start a new business, but every industry he looked at was dominated by tech giants. Every industry, that is, except the art world.

“The art industry is archaic,” Gulak says. “Galleries have monopolies over small groups of artists, and they have absolute control over the prices. The buyers are told what the value is, and almost everywhere you look in the industry, there’s inefficiencies.”

At MIT, Gulak was studying the recommender engines that are used to populate social media feeds and personalize show and music suggestions, and he envisioned something similar for the visual arts.

“I thought, why, when I go on the big art platforms, do I see horrible combinations of artwork even though I’ve had accounts on these platforms for years?” Gulak says. “I’d get new emails every week titled ‘New art for your collection,’ and the platform had no idea about my taste or budget.”

For a class project at MIT, Gulak built a system that tried to predict the types of art that would do well in a gallery. By his final year at MIT, he had realized that working directly with artists would be a more promising approach.

“Online platforms typically take a 30 percent fee, and galleries can take an additional 50 percent fee, so the artist ends up with a small percentage of each online sale, but the buyer also has to pay a luxury import duty on the full price,” Gulak explains. “That means there’s a massive amount of fat in the middle, and that’s where our direct-to-artist business model comes in.”

Today NALA, which stands for Networked Artistic Learning Algorithm, onboards artists by having them upload artwork and fill out a questionnaire about their style. They can begin uploading work immediately and choose their listing price.

The company began by using AI to match art with its most likely buyer. Gulak notes that not all art will sell (“if you’re making rock paintings there may not be a big market”), and artists may price their work higher than buyers are willing to pay, but the algorithm works to put art in front of the most likely buyer based on style preferences and budget.

NALA also handles sales and shipments, providing artists with 100 percent of their list price from every sale. “By not taking commissions, we’re very pro artists,” Gulak says. “We also allow all artists to participate, which is unique in this

Top Home Improvement Trends for 2025: What’s In and What’s Out

As we head into 2025, the world of home improvement is evolving. With shifting design trends, new technologies, and growing environmental awareness, homeowners are investing in spaces that combine functionality, comfort, and sustainability. If you’re planning any updates or renovations this year, here are the biggest trends to consider, and what’s starting to fade.

1. What’s Fading: The All-White Everything Trend

For a long time, white walls, white kitchens, and minimalist designs ruled the home improvement scene. But in 2025, this trend is losing its appeal as homeowners seek warmth, texture, and more vibrant, expressive design.

Excessive Minimalism: Rooms that feel sterile and overly simplistic are being replaced by spaces that encourage comfort, individuality, and personality.
Impersonal Decor: Mass-produced, generic furniture is being swapped out for pieces that reflect personal style, whether vintage, eclectic, or custom-designed.

2. Smart Homes Aren’t Just for Tech Enthusiasts

Technology continues to transform how we live in our homes. In 2025, smart home technology is becoming more accessible and functional, extending beyond simple security systems.

Voice-Controlled Devices: Smart speakers and virtual assistants like Alexa and Google Assistant are now controlling everything from lighting to thermostats and even kitchen appliances.
Home Automation: Homeowners are embracing automation with systems that learn their habits and adjust temperature, lighting, and security settings without manual input.
Smart Kitchens: AI-powered appliances that can suggest recipes, order groceries, and even cook food are becoming more common.

3. Maximizing Small Spaces with Multi-Functional Furniture

As real estate prices rise and space becomes more limited, homeowners are looking for ways to make the most of the space they have. Multi-functional furniture is a solution that’s here to stay.

Foldable and Expandable Furniture: Pieces like foldable dining tables, expandable couches, and beds that transform into desks help make the most of small living spaces.
Hidden Storage Solutions: Under-bed storage, built-in shelves, and even furniture that doubles as storage are gaining popularity for their ability to keep homes organized and clutter-free.

4. Bold Colors and Customization in Interior Design

While neutral tones dominated for years, 2025 is seeing a return of bold colors, personalized touches, and unique design choices in home interiors.

Vibrant Hues: Expect to see shades like deep blues, rich greens, and bold oranges making their way into living rooms, kitchens, and bedrooms.
Custom Decor: Homeowners are opting for custom furniture, handmade art, and personalized details to make their homes truly one-of-a-kind.

5. Outdoor Living Spaces as Extensions of the Home

As the lines between indoor and outdoor living continue to blur, outdoor spaces are becoming more like fully functional rooms of the house.

Outdoor Kitchens: Full outdoor kitchens with grills, sinks, and refrigerators are becoming more common for those who love entertaining or dining al fresco.
Fire Pits and Lounges: Comfortable seating areas and fire pits are essential for cozy evenings in the backyard.
Zen Gardens and Relaxation Areas: More homeowners are designing outdoor spaces dedicated to relaxation, incorporating elements like water features, greenery, and quiet spots for reflection.

6. Sustainable Living Is More Than a Trend

Eco-friendly renovations are no longer a “nice to have” but a “must have.” Homeowners are increasingly seeking to reduce their carbon footprint with sustainable upgrades that save energy and water while boosting home value.

Energy-Efficient Appliances: From smart refrigerators to dishwashers that use less water and energy, the demand for energy-efficient appliances continues to rise.
Solar Panels: Solar energy is an increasingly common choice for homeowners looking to cut energy costs and reduce reliance on fossil fuels.
Water Conservation: Low-flow toilets, rainwater harvesting systems, and drought-resistant landscaping are all becoming more popular.

Wrapping Up

Whether you’re looking to reduce your environmental impact, embrace cutting-edge tech, or create a space that reflects your personal style, 2025 is the year of transformation for home improvement. By focusing on sustainability, smart technology, and customizable design, you can create a space that’s not only functional but also a true reflection of who you are.

Q&A: The climate impact of generative AI

Vijay Gadepally, a senior staff member at MIT Lincoln Laboratory, leads a number of projects at the Lincoln Laboratory Supercomputing Center (LLSC) to make computing platforms, and the artificial intelligence systems that run on them, more efficient. Here, Gadepally discusses the increasing use of generative AI in everyday tools, its hidden environmental impact, and some of the ways that Lincoln Laboratory and the greater AI community can reduce emissions for a greener future.

Q: What trends are you seeing in terms of how generative AI is being used in computing?

A: Generative AI uses machine learning (ML) to create new content, like images and text, based on data that is fed into the ML system. At the LLSC we design and build some of the largest academic computing platforms in the world, and over the past few years we’ve seen an explosion in the number of projects that need access to high-performance computing for generative AI. We’re also seeing how generative AI is changing all sorts of fields and domains; for example, ChatGPT is already influencing the classroom and the workplace faster than regulations seem able to keep up. We can imagine all sorts of uses for generative AI within the next decade or so, like powering highly capable virtual assistants, developing new drugs and materials, and even improving our understanding of basic science. We can’t predict everything that generative AI will be used for, but I can certainly say that as algorithms grow more complex, their compute, energy, and climate impact will continue to grow very quickly.

Q: What strategies is the LLSC using to mitigate this climate impact?

A: We’re always looking for ways to make computing more efficient, as doing so helps our data center make the most of its resources and allows our scientific colleagues to push their fields forward as efficiently as possible.
As one example, we’ve been reducing the amount of power our hardware consumes by making simple changes, similar to dimming or turning off lights when you leave a room. In one experiment, we reduced the energy consumption of a group of graphics processing units by 20 to 30 percent, with minimal impact on their performance, by enforcing a power cap. This technique also lowered the hardware operating temperatures, making the GPUs easier to cool and longer lasting.

Another strategy is changing our behavior to be more climate-aware. At home, some of us might choose to use renewable energy sources or intelligent scheduling. We are using similar techniques at the LLSC, such as training AI models when temperatures are cooler or when local grid energy demand is low.

We also realized that a lot of the energy spent on computing is wasted, much as a water leak increases your bill without any benefit to your home. We developed some new techniques that allow us to monitor computing workloads as they are running and then terminate those that are unlikely to yield good results. Surprisingly, in a number of cases we found that the majority of computations could be terminated early without compromising the end result.

Q: What’s an example of a project you’ve done that reduces the energy consumption of a generative AI program?

A: We recently built a climate-aware computer vision tool. Computer vision is a domain focused on applying AI to images: for example, differentiating between cats and dogs in an image, correctly labeling objects within an image, or looking for components of interest within an image. In our tool, we included real-time carbon telemetry, which produces information about how much carbon is being emitted by our local grid as a model is running.
Depending on this information, our system will automatically switch to a more energy-efficient version of the model, which typically has fewer parameters, in times of high carbon intensity, or to a much higher-fidelity version of the model in times of low carbon intensity. By doing this, we saw a nearly 80 percent reduction in carbon emissions over a one- to two-day period. We recently extended this idea to other generative AI tasks, such as text summarization, and found the same results. Interestingly, the performance sometimes improved after using our technique!

Q: What can we do as consumers of generative AI to help mitigate its climate impact?

A: As consumers, we can ask our AI providers to offer greater transparency. For example, on Google Flights, I can see a variety of options that indicate a specific flight’s carbon footprint. We should be getting similar kinds of measurements from generative AI tools so that we can make a conscious decision on which product or platform to use based on our priorities.

We can also make an effort to be more educated on generative AI emissions in general. Many of us are familiar with vehicle emissions, and it can help to talk about generative AI emissions in comparative terms. People may be surprised to know, for example, that one image-generation task is roughly equivalent to driving four miles in a gas car, or that it takes the same amount of energy to charge an electric car as it does to generate about 1,500 text summarizations.

There are many cases where customers would be happy to make a trade-off if they knew the trade-off’s impact.

Q: What do you see for the future?

A: Mitigating the climate impact of generative AI is one of those problems that people all over the world are working on, and with a similar goal. We’re doing a lot of work here at Lincoln Laboratory, but it’s only scratching the surface.
In the long term, data centers, AI developers, and energy grids will need to work together to provide “energy audits” to uncover other unique ways that we can improve computing efficiencies. We need more partnerships and more collaboration in order to forge ahead. If you’re interested in learning more, or collaborating with Lincoln Laboratory on these efforts, please contact Vijay Gadepally.
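Two of the software-side strategies Gadepally describes, switching to a smaller model when the grid is carbon-intensive and terminating jobs that are unlikely to yield good results, can be sketched as simple policies. This is a minimal illustration under stated assumptions, not LLSC’s implementation: the model names and sizes, the 400 gCO2/kWh cutoff, and the “compare against a reference run” stopping rule (a common early-stopping heuristic, swapped in here for illustration) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelVariant:
    """A deployable version of a model (names and sizes are hypothetical)."""
    name: str
    params_millions: int


# Hypothetical variants: a compact model and a larger, higher-fidelity one.
EFFICIENT = ModelVariant("efficient", 25)
HIGH_FIDELITY = ModelVariant("high-fidelity", 300)

# Hypothetical cutoff in grams of CO2 per kWh; a real deployment would tune
# this to its own grid and its carbon-telemetry feed.
HIGH_CARBON_THRESHOLD = 400.0


def choose_variant(carbon_intensity_g_per_kwh: float) -> ModelVariant:
    """Serve the smaller model while the local grid is carbon-intensive."""
    if carbon_intensity_g_per_kwh >= HIGH_CARBON_THRESHOLD:
        return EFFICIENT
    return HIGH_FIDELITY


def should_stop_early(losses: list[float], reference_losses: list[float],
                      warmup: int = 3, margin: float = 0.1) -> bool:
    """Flag a running job whose latest validation loss trails a reference run.

    Both lists hold one validation loss per checkpoint. The job is flagged once
    it is past `warmup` checkpoints and its latest loss exceeds the reference
    loss at the same checkpoint by more than `margin` (a relative fraction).
    """
    step = len(losses) - 1
    if step < warmup or step >= len(reference_losses):
        return False
    return losses[step] > reference_losses[step] * (1 + margin)


if __name__ == "__main__":
    for reading in (120.0, 650.0):
        print(f"{reading} gCO2/kWh -> {choose_variant(reading).name}")

    reference = [2.0, 1.5, 1.2, 1.0, 0.9]  # losses from an earlier, good run
    print(should_stop_early([2.1, 1.8, 1.6, 1.5], reference))   # True
    print(should_stop_early([2.0, 1.5, 1.2, 1.05], reference))  # False
```

A single fixed threshold is the simplest possible switching rule; a production system might add hysteresis so it does not flip between variants every time the carbon reading hovers near the cutoff.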

Teaching AI to communicate sounds like humans do

Whether you’re describing the sound of your faulty car engine or meowing like your neighbor’s cat, imitating sounds with your voice can be a helpful way to relay a concept when words don’t do the trick. Vocal imitation is the sonic equivalent of doodling a quick picture to communicate something you saw, except that instead of using a pencil to illustrate an image, you use your vocal tract to express a sound. This might seem difficult, but it’s something we all do intuitively: To experience it for yourself, try using your voice to mirror the sound of an ambulance siren, a crow, or a bell being struck.

Inspired by the cognitive science of how we communicate, MIT Computer Science and Artificial Intelligence Laboratory (CSAIL) researchers have developed an AI system that can produce human-like vocal imitations with no training, and without ever having “heard” a human vocal impression before.

To achieve this, the researchers engineered their system to produce and interpret sounds much like we do. They started by building a model of the human vocal tract that simulates how vibrations from the voice box are shaped by the throat, tongue, and lips. Then, they used a cognitively inspired AI algorithm to control this vocal tract model and make it produce imitations, taking into consideration the context-specific ways that humans choose to communicate sound.

The model can effectively take many sounds from the world and generate a human-like imitation of them, including noises like leaves rustling, a snake’s hiss, and an approaching ambulance siren. Their model can also be run in reverse to guess real-world sounds from human vocal imitations, similar to how some computer vision systems can retrieve high-quality images based on sketches.
For instance, the model can correctly distinguish the sound of a human imitating a cat’s “meow” versus its “hiss.”

In the future, this model could potentially lead to more intuitive “imitation-based” interfaces for sound designers, more human-like AI characters in virtual reality, and even methods to help students learn new languages.

The co-lead authors (MIT CSAIL PhD students Kartik Chandra SM ’23 and Karima Ma, and undergraduate researcher Matthew Caren) note that computer graphics researchers have long recognized that realism is rarely the ultimate goal of visual expression. For example, an abstract painting or a child’s crayon doodle can be just as expressive as a photograph.

“Over the past few decades, advances in sketching algorithms have led to new tools for artists, advances in AI and computer vision, and even a deeper understanding of human cognition,” notes Chandra. “In the same way that a sketch is an abstract, non-photorealistic representation of an image, our method captures the abstract, non-phono-realistic ways humans express the sounds they hear. This teaches us about the process of auditory abstraction.”

The art of imitation, in three parts

The team developed three increasingly nuanced versions of the model to compare to human vocal imitations. First, they created a baseline model that simply aimed to generate imitations that were as similar to real-world sounds as possible, but this model didn’t match human behavior very well.

The researchers then designed a second “communicative” model. According to Caren, this model considers what’s distinctive about a sound to a listener. For instance, you’d likely imitate the sound of a motorboat by mimicking the rumble of its engine, since that’s its most distinctive auditory feature, even if it’s not the loudest aspect of the sound (compared to, say, the water splashing). This second model created imitations that were better than the baseline, but the team wanted to improve it even more.
To take their method a step further, the researchers added a final layer of reasoning to the model. “Vocal imitations can sound different based on the amount of effort you put into them. It costs time and energy to produce sounds that are perfectly accurate,” says Chandra. The researchers’ full model accounts for this by trying to avoid utterances that are very rapid, loud, or high- or low-pitched, which people are less likely to use in a conversation. The result: more human-like imitations that closely match many of the decisions that humans make when imitating the same sounds.

After building this model, the team conducted a behavioral experiment to see whether the AI- or human-generated vocal imitations were perceived as better by human judges. Notably, participants in the experiment favored the AI model 25 percent of the time in general, and as much as 75 percent of the time for an imitation of a motorboat and 50 percent for an imitation of a gunshot.

Toward more expressive sound technology

Passionate about technology for music and art, Caren envisions that this model could help artists better communicate sounds to computational systems and assist filmmakers and other content creators with generating AI sounds that are more nuanced to a specific context. It could also enable a musician to rapidly search a sound database by imitating a noise that is difficult to describe in, say, a text prompt.

In the meantime, Caren, Chandra, and Ma are looking at the implications of their model in other domains, including the development of language, how infants learn to talk, and even imitation behaviors in birds like parrots and songbirds.

The team still has work to do with the current iteration of their model: It struggles with some consonants, like “z,” which led to inaccurate impressions of some sounds, like bees buzzing. They also can’t yet replicate how humans imitate speech, music, or sounds that are imitated differently across languages, like a heartbeat.
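The three increasingly nuanced models described above can be illustrated with a toy example built around the motorboat case. This sketch is not the CSAIL team’s method, which drives a simulated vocal tract and works on real audio. Everything in it is a hypothetical stand-in: two invented auditory features (engine rumble and water splash), a two-sound world, three candidate imitations with made-up effort scores, and a softmax “listener” whose sharpness and effort penalty are arbitrary constants.

```python
import math

# Hypothetical auditory features: (engine_rumble, water_splash), each in [0, 1].
# The numbers are invented for illustration, not measured from audio.
SOUNDS = {
    "motorboat": (0.5, 0.9),  # the splash is louder than the rumble
    "waves": (0.0, 0.9),      # an alternative a listener could confuse it with
}

# Candidate imitations: (features, effort). Effort is a made-up cost standing
# in for how rapid, loud, or extreme in pitch an utterance would be.
IMITATIONS = {
    "splash": ((0.0, 0.9), 0.1),
    "rumble": ((0.5, 0.0), 0.1),
    "loud rumble": ((0.9, 0.0), 0.8),
}

BETA = 5.0           # hypothetical: how sharply the listener discriminates
EFFORT_WEIGHT = 1.0  # hypothetical: how strongly effort is penalized


def listener_prob(target, features):
    """P(listener infers `target` | imitation): softmax over -BETA * distance."""
    weights = {name: math.exp(-BETA * math.dist(features, f))
               for name, f in SOUNDS.items()}
    return weights[target] / sum(weights.values())


def baseline(target):
    """Stage 1: the imitation acoustically closest to the real sound."""
    return min(IMITATIONS,
               key=lambda i: math.dist(IMITATIONS[i][0], SOUNDS[target]))


def communicative(target):
    """Stage 2: the imitation a listener is most likely to decode correctly."""
    return max(IMITATIONS,
               key=lambda i: listener_prob(target, IMITATIONS[i][0]))


def full_model(target):
    """Stage 3: informativeness traded off against production effort."""
    def utility(i):
        features, effort = IMITATIONS[i]
        return math.log(listener_prob(target, features)) - EFFORT_WEIGHT * effort
    return max(IMITATIONS, key=utility)


if __name__ == "__main__":
    for model in (baseline, communicative, full_model):
        print(f"{model.__name__}: {model('motorboat')}")
```

With these invented numbers, the baseline picks the splash (the loudest part of the sound), the communicative model picks the exaggerated rumble because a listener is least likely to mistake it for waves, and the effort-aware model settles on the ordinary rumble.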
Stanford University linguistics professor Robert Hawkins says that language is full of onomatopoeia and words that mimic but don’t fully replicate the things they describe, like the “meow” sound that very inexactly approximates the sound that cats make. “The processes that get us from the sound of a real cat to a word like ‘meow’ reveal a lot about the intricate interplay between physiology, social reasoning, and communication in the evolution of language,” says Hawkins, who wasn’t

New AI tool generates realistic satellite images of future flooding

Visualizing the potential impacts of a hurricane on people’s homes before it hits can help residents prepare and decide whether to evacuate.

MIT scientists have developed a method that generates satellite imagery from the future to depict how a region would look after a potential flooding event. The method combines a generative artificial intelligence model with a physics-based flood model to create realistic, bird’s-eye-view images of a region, showing where flooding is likely to occur given the strength of an oncoming storm.

As a test case, the team applied the method to Houston and generated satellite images depicting what certain locations around the city would look like after a storm comparable to Hurricane Harvey, which hit the region in 2017. The team compared these generated images with actual satellite images taken of the same regions after Harvey hit. They also compared them against AI-generated images produced without a physics-based flood model.

The team’s physics-reinforced method generated satellite images of future flooding that were more realistic and accurate. The AI-only method, in contrast, generated images of flooding in places where flooding is not physically possible.

The team’s method is a proof of concept, meant to demonstrate a case in which generative AI models can generate realistic, trustworthy content when paired with a physics-based model. In order to apply the method to other regions to depict flooding from future storms, it will need to be trained on many more satellite images to learn how flooding would look in other regions.

“The idea is: One day, we could use this before a hurricane, where it provides an additional visualization layer for the public,” says Björn Lütjens, a postdoc in MIT’s Department of Earth, Atmospheric and Planetary Sciences, who led the research while he was a doctoral student in MIT’s Department of Aeronautics and Astronautics (AeroAstro).
“One of the biggest challenges is encouraging people to evacuate when they are at risk. Maybe this could be another visualization to help increase that readiness.”

To illustrate the potential of the new method, which they have dubbed the “Earth Intelligence Engine,” the team has made it available as an online resource for others to try.

The researchers report their results today in the journal IEEE Transactions on Geoscience and Remote Sensing. The study’s MIT co-authors include Brandon Leshchinskiy; Aruna Sankaranarayanan; and Dava Newman, professor of AeroAstro and director of the MIT Media Lab; along with collaborators from multiple institutions.

Generative adversarial images

The new study is an extension of the team’s efforts to apply generative AI tools to visualize future climate scenarios.

“Providing a hyper-local perspective of climate seems to be the most effective way to communicate our scientific results,” says Newman, the study’s senior author. “People relate to their own zip code, their local environment where their family and friends live. Providing local climate simulations becomes intuitive, personal, and relatable.”

For this study, the authors use a conditional generative adversarial network, or GAN, a type of machine learning method that can generate realistic images using two competing, or “adversarial,” neural networks. The first “generator” network is trained on pairs of real data, such as satellite images before and after a hurricane. The second “discriminator” network is then trained to distinguish between the real satellite imagery and the imagery synthesized by the first network.

Each network automatically improves its performance based on feedback from the other network. The idea, then, is that such an adversarial push and pull should ultimately produce synthetic images that are indistinguishable from the real thing.
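For readers who want the math behind the adversarial push and pull, the textbook conditional GAN objective is shown below, with x a pre-storm image (the condition), y the matching real post-storm image, G the generator, and D the discriminator, which outputs the probability that its input pair is real. This is the standard formulation from the GAN literature, offered as a sketch with the generator’s noise input omitted for brevity; the study’s actual training loss may add further terms.

```latex
\min_{G}\,\max_{D}\;
\mathbb{E}_{x,y}\!\left[\log D(x, y)\right]
+ \mathbb{E}_{x}\!\left[\log\bigl(1 - D\bigl(x, G(x)\bigr)\bigr)\right]
```

D is trained to score real pairs (x, y) high and generated pairs (x, G(x)) low, while G is trained to make D score its outputs high; at the ideal equilibrium, D can no longer tell the two apart.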
Nevertheless, GANs can still produce “hallucinations,” or factually incorrect features in an otherwise realistic image that shouldn’t be there. “Hallucinations can mislead viewers,” says Lütjens, who began to wonder whether such hallucinations could be avoided, such that generative AI tools can be trusted to help inform people, particularly in risk-sensitive scenarios. “We were thinking: How can we use these generative AI models in a climate-impact setting, where having trusted data sources is so important?”

Flood hallucinations

In their new work, the researchers considered a risk-sensitive scenario in which generative AI is tasked with creating satellite images of future flooding that could be trustworthy enough to inform decisions about how to prepare and potentially evacuate people out of harm’s way.

Typically, policymakers can get an idea of where flooding might occur based on visualizations in the form of color-coded maps. These maps are the final product of a pipeline of physical models that usually begins with a hurricane track model, which then feeds into a wind model that simulates the pattern and strength of winds over a local region. This is combined with a flood or storm surge model that forecasts how wind might push any nearby body of water onto land. A hydraulic model then maps out where flooding will occur based on the local flood infrastructure and generates a visual, color-coded map of flood elevations over a particular region.

“The question is: Can visualizations of satellite imagery add another level to this, that is a bit more tangible and emotionally engaging than a color-coded map of reds, yellows, and blues, while still being trustworthy?” Lütjens says.

The team first tested how generative AI alone would produce satellite images of future flooding. They trained a GAN on actual images taken by satellites as they passed over Houston before and after Hurricane Harvey.
When they tasked the generator with producing new flood images of the same regions, they found that the images resembled typical satellite imagery, but a closer look revealed hallucinations in some images, in the form of floods where flooding should not be possible (for instance, in locations at higher elevation).

To reduce hallucinations and increase the trustworthiness of the AI-generated images, the team paired the GAN with a physics-based flood model that incorporates real, physical parameters and phenomena, such as an approaching hurricane’s trajectory, storm surge, and flood patterns. With this physics-reinforced method, the team generated satellite images around Houston that depict the same flood extent, pixel by pixel, as forecasted by the flood model.

“We show a tangible way to combine

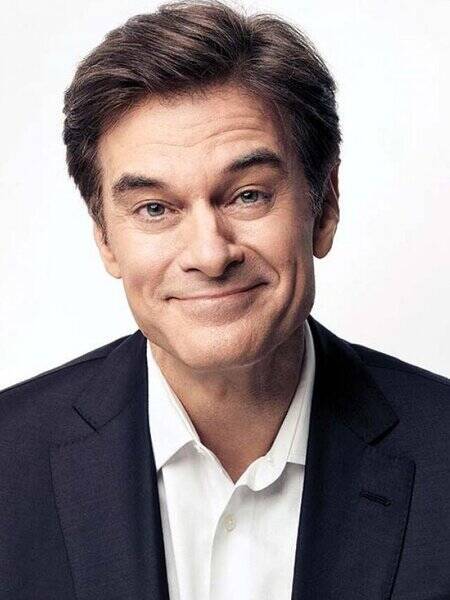

Donald Trump Taps Dr. Oz to Head U.S. Medicaid & Medicare

President-elect Donald Trump has nominated Dr. Mehmet Oz, a well-known television personality and surgeon, to lead the Centers for Medicare and Medicaid Services (CMS). Oz, who will oversee programs impacting millions of Americans, is known for his media presence and health advocacy. However, some have criticized his promotion of treatments lacking scientific support. Trump’s selection of Oz highlights his unconventional approach to leadership, blending celebrity influence with healthcare reform goals.