Gemini’s Ascent: Can Google’s Smart AI Crack the Top Tier?

Alright, let’s talk AI. Google’s got their contender, Gemini, and it’s reportedly racking up users faster than you can say “neural network.” But in a crowded field of digital brains, is Gemini just the enthusiastic newcomer, or does it have the smarts to really challenge the reigning AI heavyweights? We’ll dive into Gemini’s growth spurt and see if it’s on a trajectory to truly compete at the top.

So, what’s fueling Gemini’s rising popularity? Is it the seamless integration with Google’s sprawling ecosystem – kind of like that comfy pair of sneakers that just works with everything? Or maybe its unique bag of AI tricks is finally resonating with users. We’ll analyze where this growth is happening and how it stacks up against the adoption rates of its well-established rivals. Spoiler alert: the AI leaderboard is a tough nut to crack.

The AI Titans Gemini is Eyeing

Think of the current AI landscape as a tech Olympics. A few players have already snagged the gold medals, boasting massive user bases, deeply ingrained integrations, and the kind of brand recognition that makes them practically household names in the digital realm. We’ll examine what gives these frontrunners their edge and the considerable ground Gemini needs to cover.

Gemini’s Strengths: The “Aha!” Moments (and Potential “Hmm…” Areas)

Every AI has its signature moves. Gemini’s got some impressive capabilities, like its knack for juggling text, images, and even video – a true multimodal maestro. Plus, its cozy relationship with the Googleverse is a definite plus. But are there areas where it’s still in AI training wheels compared to the seasoned pros? We’ll offer a balanced perspective on Gemini’s current toolkit.

Google’s Strategy Playbook

Google isn’t known for sitting on the sidelines. Their strategy to boost Gemini’s profile likely involves deeper integration across their suite of products (imagine Gemini subtly enhancing your Google Docs… or maybe even your search for that perfect pizza recipe). They’re also probably forging partnerships and definitely pouring resources into making Gemini even smarter. We’ll dissect their game plan.

The Developer Factor: Building the Future of AI

For an AI to truly flourish, it needs a vibrant community of developers creating innovative applications. How does Gemini’s developer support stack up against the competition? A thriving ecosystem can be the secret sauce for long-term success. Think of it as providing the best LEGO bricks for the most creative builders.

The Crystal Ball: Gemini’s Chances of Reaching the Summit

So, the million-dollar question: can Gemini actually elbow its way to the top of the AI hierarchy? It’s a marathon, not a sprint. We’ll speculate on the key milestones and breakthroughs that could propel Google’s AI to the forefront. The AI landscape is constantly shifting, so buckle up.

What the Smart Folks Are Saying

To get a well-rounded view, we’ll likely tap into the insights of AI analysts and industry experts. Their perspectives can offer a valuable reality check and shed light on Gemini’s true potential.

The Takeaway

Gemini’s growing user base is a clear signal that Google is serious about the AI race. But to truly contend with the leaders, they’ll need to leverage Gemini’s unique strengths, strategically address any shortcomings, and continue to innovate at a rapid pace. The quest for AI supremacy is on, and Gemini is definitely a contender to watch.

China’s ‘Embodied AI’ Revolution: Humanoid Robots and Drones Reshape Daily Life

China is accelerating its use of “embodied AI”—real-world robots and drones powered by artificial intelligence—to transform daily life. In major cities like Shenzhen, food deliveries by autonomous drones have become increasingly common, while humanoid robots are showing up at tech expos, shopping centers, and even assisting with customer service. The AI momentum kicked into high gear thanks to homegrown models like DeepSeek’s R1, which rival global leaders despite running on less sophisticated chips. This success is fueling a push for open-source development, drawing global attention to China’s growing AI ecosystem. Shenzhen is at the heart of this wave. The city is fast becoming China’s AI capital, with strong government support, relaxed regulations, and booming innovation from both startups and tech giants. Robots—both humanlike and wheeled—are being tested in real-world settings, from logistics centers to care facilities. While some of the flashier robots are still working through mobility and coordination issues, the country is pressing ahead with its plans. Government funding is flowing into tech development as a strategic pivot away from weakening sectors like real estate and exports. There’s also been a shift in tone from China’s leadership. Recent signals from the top suggest a renewed embrace of tech entrepreneurship, after years of tightened control. That green light is giving innovators the confidence—and the runway—to dream even bigger.

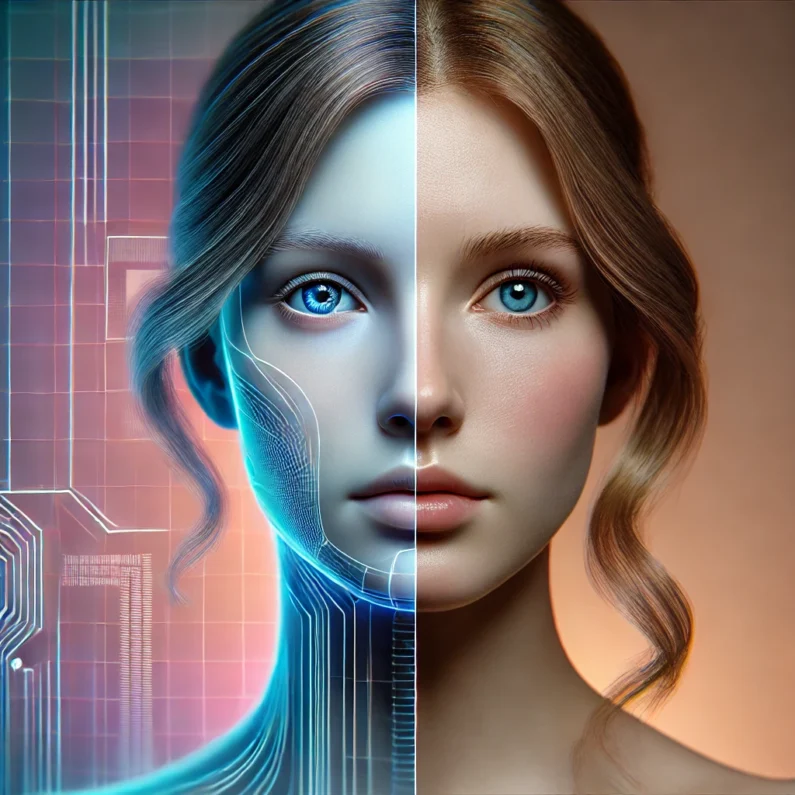

Is the Rise of AI Deepfakes Eroding Trust in Reality?

In an era where technology evolves at breakneck speed, AI-generated deepfakes have emerged as a double-edged sword. While they showcase the incredible capabilities of artificial intelligence, they also blur the lines between reality and fabrication. From convincingly altered videos of public figures to synthetic voices mimicking real individuals, the proliferation of deepfakes poses a pressing question: Can we still trust what we see and hear? As these digital forgeries become more sophisticated, concerns grow about their potential misuse in politics, media, and personal relationships. The implications are vast—undermining public trust, spreading misinformation, and challenging the very notion of objective truth. So, what do you think?

How AI Is Quietly Powering Your Everyday Life in 2025

Artificial intelligence (AI) used to sound like something out of a sci-fi movie. Fast-forward to 2025, and it’s quietly running in the background of your daily life, from the moment you wake up to the second your head hits the pillow. It’s not just in high-tech labs anymore; it’s in your apps, your home, your car, and even your grocery list. Here’s a peek at how AI is seamlessly woven into your everyday routine.

1. Your Morning Routine Is Smarter Than You Think

The moment your alarm goes off, AI is already at work. Smart home systems like Alexa or Google Assistant adjust your lights, set your coffee to brew, and even read you the morning news, tailored to your interests. Some apps now use AI to analyze your sleep patterns and suggest better bedtime routines, optimizing your rest based on real-time feedback.

2. AI Helps You Get Dressed and Out the Door

Apps like StyleDNA or Amazon’s “Personal Shopper” use AI to suggest outfits based on the weather, your calendar, and even fashion trends. Meanwhile, AI-powered traffic prediction apps like Waze help plan your best route to work, rerouting around accidents or delays in real time.

3. Your Workplace Runs on AI (Even If You Don’t Notice)

Whether you’re answering emails, editing documents, or working in customer service, AI is boosting your productivity. Tools like Grammarly or ChatGPT improve communication. AI scheduling assistants handle meetings. In customer support, chatbots solve problems before a human agent even gets involved. And for business owners, AI is analyzing sales trends, recommending pricing adjustments, and even helping write marketing content.

4. AI Knows What You Want for Lunch

Feeling like sushi? AI-driven food delivery apps predict what you’re craving based on your history, the time of day, and local restaurant trends. Grocery apps do the same, suggesting items to add to your cart and generating recipes based on what’s in your fridge.

5. Your Health and Fitness Plans Just Got Smarter

Wearables like the Apple Watch or Fitbit use AI to monitor your heart rate, sleep cycles, and activity levels, then recommend ways to improve your health. Virtual fitness coaches provide personalized workouts. Even telehealth platforms now use AI to screen symptoms and guide patients before they talk to a doctor.

6. AI Is Behind the Scenes of Your Streaming Binges

Wondering how Netflix or Spotify always seem to “get you”? That’s AI, analyzing what you’ve watched or listened to, and comparing it with millions of other users’ habits. The more you watch, the better it predicts what you’ll like.

7. Security and Smart Homes Get a Boost

AI-powered home security systems can detect unusual behavior, recognize familiar faces, and even notify you of package deliveries. And yes, your smart doorbell probably uses facial recognition to know the difference between a neighbor and a stranger.

The Takeaway

Artificial intelligence in 2025 isn’t just about robots or futuristic tech. It’s the silent assistant making your life easier, safer, healthier, and more efficient. And as it continues to evolve, the real magic might be how effortlessly it blends into your everyday world. Stay with Readovia for more insights into how AI technology is shaping the future of everyday life.

The future of photography: how AI-generated images are redefining portraits

Artificial Intelligence (AI) is transforming the world of photography, pushing the boundaries of realism and creative expression. With AI-generated images now capable of producing hyper-realistic visuals that leave viewers questioning their authenticity, we’re entering a new era where digital creations challenge traditional photography in both artistry and accessibility.

From Hyper-Realism to Unconventional Beauty

AI-generated images can be designed to mimic real-life features or go beyond them, exploring new aesthetics and expressions. Recently, digital artist Moritz Stellmacher shared an AI-generated human portrait that was so realistic, it had viewers on social media guessing whether it was a photograph of a real person. This uncertainty highlights the advanced realism of AI tools today, which can blur the lines between real and artificial in ways we haven’t seen before.

This new flexibility in creating faces and features might soon influence what society deems attractive. Traditionally, the modeling industry has relied on specific beauty standards like symmetry and clear skin, but AI-generated models can now embody striking, unconventional aesthetics that challenge these norms. As viewers grow accustomed to digitally crafted faces, demand for diversity and uniqueness in visual media may rise, leading to a broader representation of beauty.

AI vs. Human Models: A Shift in the Modeling Industry

The modeling industry is already adapting to the impact of AI. Companies can now create AI models tailored to specific advertising needs without the costs and logistics involved in traditional photography. This shift is significant in industries like e-commerce, where AI-generated images can be modified instantly to fit seasonal or demographic trends. By using AI, brands can generate a wide range of looks quickly and economically, making AI an attractive option for digital campaigns.

However, human models continue to bring irreplaceable qualities like authentic emotion, unique expressions, and genuine connection, which AI models struggle to replicate fully. This blend of AI and human photography might encourage a future where both forms coexist, each serving distinct purposes and enhancing the variety of visual media.

The Competitive Edge of AI: Mass Production and A/B Testing

A standout advantage of AI-generated images is their ability to be mass-produced at almost no cost, a game-changer for e-commerce and online advertising. Traditional photoshoots require considerable time, expense, and coordination, while AI can produce thousands of unique images in minutes. This efficiency allows companies to quickly adapt visuals to consumer preferences, boosting engagement in competitive markets.

In addition, AI facilitates large-scale A/B testing, analyzing hundreds of variations of product photos to determine which ones lead to higher conversions. With data-driven design insights, businesses can refine their marketing strategies for maximum impact, an approach that would be costly and time-consuming with conventional photography.

AI-Enhanced Artistic Expression Beyond Physical Limits

Unlike traditional photography, which is bound by real-world physics and environments, AI-generated images offer unlimited artistic freedom. Artists and brands can create surreal, gravity-defying visuals that defy physical laws and blend various art styles, unlocking new realms of creativity. This capacity to break conventional rules of composition and design allows for imaginative visual storytelling, helping brands and creators engage audiences in exciting new ways.

AI’s Role in a Diverse Visual Landscape

As AI technology becomes more integrated into the world of photography, we’re likely to see a more diverse visual landscape where AI-generated images complement human photography. While AI offers speed, scalability, and innovation, human models and photographers will continue to bring authenticity, emotion, and a personal touch to images. Together, AI and traditional photography will shape a future where beauty is more inclusive, varied, and captivating than ever before.

New AI tool generates realistic satellite images of future flooding

Visualizing the potential impacts of a hurricane on people’s homes before it hits can help residents prepare and decide whether to evacuate. MIT scientists have developed a method that generates satellite imagery from the future to depict how a region would look after a potential flooding event. The method combines a generative artificial intelligence model with a physics-based flood model to create realistic, birds-eye-view images of a region, showing where flooding is likely to occur given the strength of an oncoming storm.

As a test case, the team applied the method to Houston and generated satellite images depicting what certain locations around the city would look like after a storm comparable to Hurricane Harvey, which hit the region in 2017. The team compared these generated images with actual satellite images taken of the same regions after Harvey hit. They also compared AI-generated images that did not include a physics-based flood model.

The team’s physics-reinforced method generated satellite images of future flooding that were more realistic and accurate. The AI-only method, in contrast, generated images of flooding in places where flooding is not physically possible.

The team’s method is a proof-of-concept, meant to demonstrate a case in which generative AI models can generate realistic, trustworthy content when paired with a physics-based model. In order to apply the method to other regions to depict flooding from future storms, it will need to be trained on many more satellite images to learn how flooding would look in other regions.

“The idea is: One day, we could use this before a hurricane, where it provides an additional visualization layer for the public,” says Björn Lütjens, a postdoc in MIT’s Department of Earth, Atmospheric and Planetary Sciences, who led the research while he was a doctoral student in MIT’s Department of Aeronautics and Astronautics (AeroAstro). “One of the biggest challenges is encouraging people to evacuate when they are at risk. Maybe this could be another visualization to help increase that readiness.”

To illustrate the potential of the new method, which they have dubbed the “Earth Intelligence Engine,” the team has made it available as an online resource for others to try.

The researchers report their results today in the journal IEEE Transactions on Geoscience and Remote Sensing. The study’s MIT co-authors include Brandon Leshchinskiy; Aruna Sankaranarayanan; and Dava Newman, professor of AeroAstro and director of the MIT Media Lab; along with collaborators from multiple institutions.

Generative adversarial images

The new study is an extension of the team’s efforts to apply generative AI tools to visualize future climate scenarios.

“Providing a hyper-local perspective of climate seems to be the most effective way to communicate our scientific results,” says Newman, the study’s senior author. “People relate to their own zip code, their local environment where their family and friends live. Providing local climate simulations becomes intuitive, personal, and relatable.”

For this study, the authors use a conditional generative adversarial network, or GAN, a type of machine learning method that can generate realistic images using two competing, or “adversarial,” neural networks. The first “generator” network is trained on pairs of real data, such as satellite images before and after a hurricane. The second “discriminator” network is then trained to distinguish between the real satellite imagery and the one synthesized by the first network. Each network automatically improves its performance based on feedback from the other network. The idea, then, is that such an adversarial push and pull should ultimately produce synthetic images that are indistinguishable from the real thing.
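
The adversarial push and pull between the two networks can be made concrete by looking at the loss each one tries to minimize. What follows is a minimal sketch rather than the study's implementation: it assumes the textbook binary cross-entropy GAN objective with the commonly used non-saturating generator loss, and the function names and example scores are illustrative assumptions. The discriminator is taken to output a probability between 0 and 1 that an image is real.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy loss for the discriminator.

    d_real: discriminator scores in (0, 1) for real images
    d_fake: discriminator scores in (0, 1) for generated images
    The loss is low when real images score near 1 and fakes near 0.
    """
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating generator loss: low when fakes are scored as real."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Hypothetical scores early in training: the discriminator spots the fakes.
early = {"d_real": [0.9, 0.8], "d_fake": [0.1, 0.2]}
# Hypothetical scores late in training: it can no longer tell them apart.
late = {"d_real": [0.5, 0.5], "d_fake": [0.5, 0.5]}

for name, scores in (("early", early), ("late", late)):
    d_loss = discriminator_loss(scores["d_real"], scores["d_fake"])
    g_loss = generator_loss(scores["d_fake"])
    print(f"{name}: discriminator={d_loss:.3f} generator={g_loss:.3f}")
```

In the hypothetical "late" case every score is 0.5, so the discriminator loss equals 2 ln 2 and the generator loss equals ln 2. Under this assumed objective, that is the point at which the discriminator is only guessing, which is what "indistinguishable from the real thing" means for the loss.
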
Nevertheless, GANs can still produce “hallucinations,” or factually incorrect features in an otherwise realistic image that shouldn’t be there.

“Hallucinations can mislead viewers,” says Lütjens, who began to wonder whether such hallucinations could be avoided, such that generative AI tools can be trusted to help inform people, particularly in risk-sensitive scenarios. “We were thinking: How can we use these generative AI models in a climate-impact setting, where having trusted data sources is so important?”

Flood hallucinations

In their new work, the researchers considered a risk-sensitive scenario in which generative AI is tasked with creating satellite images of future flooding that could be trustworthy enough to inform decisions of how to prepare and potentially evacuate people out of harm’s way.

Typically, policymakers can get an idea of where flooding might occur based on visualizations in the form of color-coded maps. These maps are the final product of a pipeline of physical models that usually begins with a hurricane track model, which then feeds into a wind model that simulates the pattern and strength of winds over a local region. This is combined with a flood or storm surge model that forecasts how wind might push any nearby body of water onto land. A hydraulic model then maps out where flooding will occur based on the local flood infrastructure and generates a visual, color-coded map of flood elevations over a particular region.

“The question is: Can visualizations of satellite imagery add another level to this, that is a bit more tangible and emotionally engaging than a color-coded map of reds, yellows, and blues, while still being trustworthy?” Lütjens says.

The team first tested how generative AI alone would produce satellite images of future flooding. They trained a GAN on actual satellite images taken by satellites as they passed over Houston before and after Hurricane Harvey. When they tasked the generator to produce new flood images of the same regions, they found that the images resembled typical satellite imagery, but a closer look revealed hallucinations in some images, in the form of floods where flooding should not be possible (for instance, in locations at higher elevation).

To reduce hallucinations and increase the trustworthiness of the AI-generated images, the team paired the GAN with a physics-based flood model that incorporates real, physical parameters and phenomena, such as an approaching hurricane’s trajectory, storm surge, and flood patterns. With this physics-reinforced method, the team generated satellite images around Houston that depict the same flood extent, pixel by pixel, as forecasted by the flood model.

“We show a tangible way to combine